Key Takeaways

- AI accelerates research at scale, but speed without oversight can create compounding errors that undermine output quality.

- Human-in-the-loop supervision is not a workaround for AI's limitations; it is a strategic control layer that makes AI-driven research defensible and trustworthy.

- Common AI failure points in research, such as hallucinations, bias, and incomplete data retrieval, require human judgment as the final check.

- As AI adoption grows, organizations that embed human oversight into their workflows will produce faster and more reliable research than organizations that do not.

Artificial intelligence is transforming research by enabling the rapid generation of data, insights, predictions, and hypotheses. The Stanford AI Index 2025 found that 78% of organizations adopted AI in 2024. However, as AI becomes faster and more autonomous, concerns about the reliability, accuracy, and accountability of its outputs are increasing.

The Stanford AI Index also shows that error rates increase as task complexity grows, especially in multi-step reasoning and domain-specific work. Human-in-the-loop (HITL) supervision is essential to mitigate these risks. Human oversight is needed not because AI is slow, but because its speed and scale can amplify minor errors across thousands of actions, so that a small mistake early in a workflow can distort everything built on it.
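The amplification effect can be illustrated with simple arithmetic. The sketch below is a minimal illustration, assuming each step in a workflow succeeds independently with the same probability; the function name `chain_success_probability` and the 99% per-step accuracy are hypothetical and are not figures from the Stanford AI Index.

```python
def chain_success_probability(per_step_accuracy: float, steps: int) -> float:
    """Probability that every step in a chain is correct.

    Assumes steps fail independently and share the same accuracy,
    which is a simplification of real research workflows.
    """
    if not 0.0 <= per_step_accuracy <= 1.0:
        raise ValueError("per_step_accuracy must be between 0 and 1")
    if steps < 0:
        raise ValueError("steps must be non-negative")
    return per_step_accuracy ** steps


if __name__ == "__main__":
    # Hypothetical 99% per-step accuracy, chosen only for illustration.
    for steps in (1, 10, 100, 1000):
        p = chain_success_probability(0.99, steps)
        print(f"{steps:>5} steps: {p:.2%} chance of an error-free run")
```

Under these assumptions, a step that is right 99% of the time yields an error-free run only about 37% of the time over 100 steps, which is why checkpoints matter more as chains grow longer.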

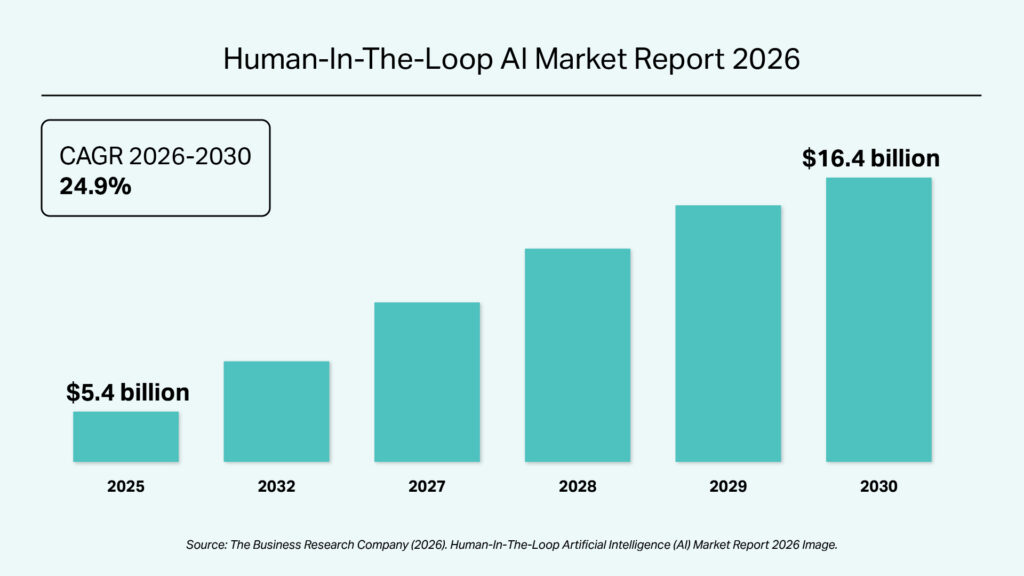

HITL is a critical safeguard, combining human judgment with machine intelligence to ensure research outcomes are trustworthy, defensible, and transparent. Human oversight grounds outputs in real-world context, ethical standards, and domain expertise. Market reports show the global HITL AI market reached USD 5.4 billion in 2025 and is projected to grow at a CAGR of about 25% to USD 16.4 billion by 2030. This investment reflects the need to scale AI responsibly while maintaining accuracy, accountability, and trust.
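As a quick consistency check on the market figures above, the growth rate implied by the two endpoints can be recomputed. This is a minimal sketch, assuming five compounding years between 2025 and 2030; the helper name `implied_cagr` is hypothetical.

```python
def implied_cagr(start_value: float, end_value: float, years: int) -> float:
    """Compound annual growth rate implied by a start value, end value, and period."""
    if start_value <= 0 or end_value <= 0 or years <= 0:
        raise ValueError("values and years must be positive")
    return (end_value / start_value) ** (1 / years) - 1


if __name__ == "__main__":
    # USD 5.4 billion (2025) to USD 16.4 billion (2030), as quoted in the text.
    rate = implied_cagr(5.4, 16.4, 5)
    print(f"Implied CAGR: {rate:.1%}")
```

The result is just under 25%, which agrees with the "about 25%" quoted in the text.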

AI increases research speed and scale but introduces limitations that require human oversight, such as confidently presenting incorrect information, misattributing syndicated content, and retrieving data inconsistently.

Where AI Adds Clear Value

- Speed and scale: AI rapidly processes structured and unstructured data, identifies patterns, extracts entities, and summarizes information faster than manual methods. However, AI tools may struggle with very large PDFs and often fail to distinguish critical from irrelevant information.

- Hypothesis generation: AI identifies correlations and themes to support early-stage hypothesis development and exploration of new perspectives. However, it rarely produces a comprehensive set of hypotheses from a dataset.

- Standardization and consistency: AI enables repeatable frameworks for company profiling, peer benchmarking, taxonomy design, and trend tagging, driving consistency in large-scale research. However, these outputs often lack the contextual intelligence needed for nuanced analysis.

Why Human Oversight Remains Essential

- Opaque blind spots: AI often fails to recognize its own knowledge gaps, producing outputs that appear complete but overlook key information. Human judgment ensures research is thorough.

- Limited auditability: Researchers lack straightforward, reliable methods to audit AI outputs for coverage gaps. Because AI models rely on historical data, they can also reproduce or intensify existing biases in areas such as customer segmentation, competitor profiling, and demand forecasting.

- Paywall and access constraints: AI often lacks access to paywalled or authoritative research. Granting that access can raise costs, so human researchers become more important wherever access is restricted.

- Hallucinations: In complex tasks, AI can generate convincing but inaccurate insights. Human reviewers are the final safeguard against unreliable information.

- Autonomous drift: When agentic AI executes long task chains, early errors can escalate into fabricated conclusions. AI optimizes toward a goal but rarely questions its validity, making human checkpoints essential.
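The idea of human checkpoints in a long task chain can be sketched in code. The example below is a minimal illustration under stated assumptions, not a description of any particular agent framework: `StepResult`, `run_with_checkpoints`, the `approve` callback, and the `confidence` score are all hypothetical names. Because AI often fails to recognize its own gaps, the sketch does not rely on the confidence score alone and also forces a review at a fixed interval.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class StepResult:
    name: str
    output: str
    confidence: float  # hypothetical self-reported score in [0, 1]


def run_with_checkpoints(
    steps: List[Callable[[str], StepResult]],
    initial_input: str,
    approve: Callable[[StepResult], bool],
    review_every: int = 3,
    min_confidence: float = 0.8,
) -> List[StepResult]:
    """Run steps in order, pausing for human approval at checkpoints.

    A checkpoint fires every `review_every` steps and whenever a step
    reports confidence below `min_confidence`. If the reviewer rejects
    a result, the chain stops so later steps cannot build on it.
    """
    if review_every < 1:
        raise ValueError("review_every must be at least 1")
    accepted: List[StepResult] = []
    current = initial_input
    for index, step in enumerate(steps, start=1):
        result = step(current)
        needs_review = (
            index % review_every == 0 or result.confidence < min_confidence
        )
        if needs_review and not approve(result):
            break  # stop before the error can propagate downstream
        accepted.append(result)
        current = result.output
    return accepted
```

In practice, `approve` would hand the result to an analyst, for example through a review queue, and return that analyst's decision. Stopping the chain on rejection is one design choice; re-running the rejected step with corrected input is another.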

While AI offers advantages in speed, scalability, and pattern recognition, it cannot be held accountable for outcomes. Its outputs must be continuously validated, contextualized, and refined through human judgment. Integrating human oversight into the research workflow ensures AI-driven insights remain accurate, defensible, and aligned with real-world business needs.

HITL oversight is a vital control that provides accountability where AI cannot. This approach mitigates risks such as hallucinations, bias, and incomplete data while preserving AI-driven efficiency.

At Integreon, our research teams operate this way. Our analysts work alongside AI tools to manage the volume and speed modern business research demands, while applying domain expertise and critical judgment to ensure outputs are usable. The result is research that is both faster to produce and reliable enough to act on.

Rather than viewing HITL as an operational constraint, treat it as a strategic control layer that enhances trust, accountability, and quality in research outcomes. As AI adoption grows, this balanced approach will deliver high-quality, reliable, and ethically grounded business research in an increasingly complex data landscape.

Want to see how this works in practice?

Explore our Research & Business Intelligence services or contact us to talk through your research needs.
